Category: Music

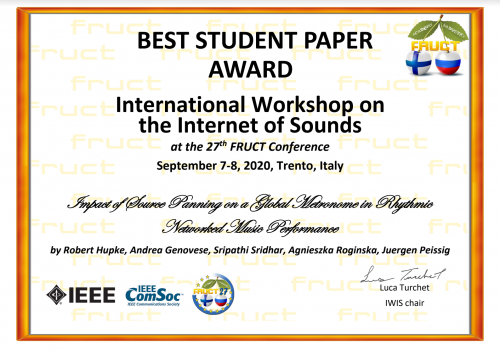

Best Student Paper Award at IWIS 2020

We were awarded the Best Student Paper Award by the 1st International Workshop on the Internet of Sounds, a new IEEE workshop hosted by the 27th FRUCT Conference. Thanks to my co-authors from Leibniz University and NYU (names on image)! The publication will be posted soon.

Unearthing old projects #1 – A Virtual Surround Sound mix

A few years ago, I attended the 3D audio course at NYU Steinhardt taught by Dr. Roginska. This is the same course that I have the honor to teach now, in 2019.

One of the assignments for that course was to create a Virtual Surround Sound mix of a regular 5.1 multichannel mix. Virtual Surround Sound is a way to enjoy multi-speaker content as binaural 3D audio over headphones. It works by virtualizing each channel-based track through digital HRTF (head-related transfer function) filters that represent the directional auditory response at the locations of a standard 5.1 speaker layout.
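The per-channel filtering described above can be sketched in a few lines of Python. This is only a minimal illustration, not the processing chain used for the assignment: the speaker azimuths are the usual nominal angles (an assumption), and `toy_hrir_pair` is a hypothetical stand-in that models just an interaural time and level difference. A real mix would load measured HRIRs, such as the KEMAR set, instead.

```python
import numpy as np

# Nominal azimuths in degrees for a 5.0 layout (assumed, negative = left).
SPEAKER_AZIMUTHS = {"L": -30, "R": 30, "C": 0, "Ls": -110, "Rs": 110}


def toy_hrir_pair(azimuth_deg, sample_rate=44100, length=64):
    """Hypothetical placeholder for a measured (left, right) HRIR pair.

    Only a delay and a gain difference between the ears are modeled.
    """
    lateral = abs(np.sin(np.radians(azimuth_deg)))
    itd_samples = int(round(lateral * 0.0007 * sample_rate))  # up to ~0.7 ms
    near = np.zeros(length)
    far = np.zeros(length)
    near[0] = 1.0
    far[itd_samples] = 1.0 - 0.5 * lateral  # far ear: later and quieter
    # Positive azimuth means the source is on the right, so the right ear is near.
    return (far, near) if azimuth_deg > 0 else (near, far)


def virtualize(channels, sample_rate=44100, hrir_length=64):
    """Downmix a dict of mono speaker feeds into a 2-channel binaural signal."""
    n = max(len(signal) for signal in channels.values())
    out = np.zeros((2, n + hrir_length - 1))
    for name, signal in channels.items():
        hrir_l, hrir_r = toy_hrir_pair(
            SPEAKER_AZIMUTHS[name], sample_rate, hrir_length
        )
        left = np.convolve(signal, hrir_l)
        right = np.convolve(signal, hrir_r)
        # Each virtual speaker contributes to both ears.
        out[0, : len(left)] += left
        out[1, : len(right)] += right
    return out
```

Calling `virtualize({"L": left_feed, "R": right_feed, "C": center_feed})` with mono NumPy arrays returns an array of shape `(2, n)` holding the left-ear and right-ear signals, ready to be written out as a stereo file for headphone listening.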

To experience the 3D effect please listen using headphones!

1. Standard Stereo downmix of 5.0 mix

2. 3D binaural VSS downmix (use headphones)

For this assignment I used source material from MedleyDB (http://medleydb.weebly.com/description.html)

Generic HRTFs were used, from the MIT database (KEMAR dummy)

No additional reverberation was added since tracks were already processed with artificial reverb.

Each source was assigned 1 or 2 virtual positions along a 5.0 configuration

(If I were to do this again, I would choose drier source material and add artificial reverb myself at the mastering stage. Pre-baked reverberation does not virtualize well with directional HRTFs, since that part of the sound is supposed to be diffuse.)

HoloDeck – Percussion Demo

… continuing from previous post

The creation of a believable mixed reality application often requires careful audiovisual calibration. For the uninitiated, mixed reality (MR) aims to blend the real and virtual worlds into one dependent scene, where the digital elements adapt to the local boundaries that define our space. Most high-quality use cases are still location-fixed and require the touch of an engineer or a design artist to tune and blend the elements together (although mobile augmented reality apps are now a common example on smartphones).

Using the material captured in the previous session, we decided to create a collaborative VR/MR demo, where a live percussionist would play along with the pre-recorded musicians, represented as digital characters. In the same space, an audience member would then be able to observe and hear both the digital characters and a live digital avatar of the real-time percussionist through VR goggles.

The ultimate goal here is to give the audience the simultaneous impression of both “being there” with the musicians, and of the musicians “being here” with the observer. In other words, a coherent cognitive space needs to subjectively exist for the participants to feel compelled by the experience.

To make our digital characters, we imported the motion capture data from Motive into Maya to rig a skeleton figure. That skeleton was then used to animate whatever digital asset we wanted to test in Unity. Our djembe percussion trio was thus transformed into a sort of video-game animation.

Our next step was to choose an appropriate demo location. We chose the NYU Future Reality Lab, which also participates in the Holodeck project, since they were able to provide us with a digital recreation of that same environment for an occluded VR headset implementation. This is important because there is always a relation between the auditory and visual components of a mixed-reality scene: the presence of one creates the expectation of the other, and our brains need cohesiveness to subconsciously create a sense of reality. Since the audience is exposed to the sound character of a real percussionist placed a few feet away, we need to show a digital environment that comes close to a scenario one would expect to produce that acoustic character. In our workflow, the auditory sense defined the visual element needed to justify it.

After an audiovisual syncing process, we used SteamVR as the dynamic spatial audio engine to create localizable point sound sources from the spot-microphone captures. To enhance the blend with the real drummer, we processed the dry object-audio material with an acoustic impulse response filter recorded at the listener’s location. This process effectively “transfers” the acoustic properties of one room onto a signal recorded in a different one. The final mix was tuned through rehearsals, where we evaluated the blend between the live and recorded virtual drummers.
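The impulse response step mentioned above boils down to a convolution, which can be sketched as follows. This is a minimal illustration under stated assumptions, not the processing used in the demo: `toy_impulse_response` is a hypothetical stand-in (exponentially decaying noise) for the response that was actually measured at the listener’s location, and the `rt60` value is arbitrary.

```python
import numpy as np


def toy_impulse_response(sample_rate=44100, rt60=0.4, seed=0):
    """Hypothetical room response: noise decaying by 60 dB over rt60 seconds.

    A real workflow would load the impulse response measured in the room.
    """
    rng = np.random.default_rng(seed)
    t = np.arange(int(rt60 * sample_rate)) / sample_rate
    decay = 10.0 ** (-3.0 * t / rt60)  # amplitude is -60 dB at t = rt60
    ir = rng.standard_normal(len(t)) * decay
    ir[0] = 1.0  # direct sound
    return ir


def apply_room_response(dry, impulse_response):
    """Convolve a dry signal with a room impulse response using the FFT."""
    n = len(dry) + len(impulse_response) - 1
    n_fft = 1 << (n - 1).bit_length()  # next power of two, avoids wrap-around
    spectrum = np.fft.rfft(dry, n_fft) * np.fft.rfft(impulse_response, n_fft)
    return np.fft.irfft(spectrum, n_fft)[:n]
```

Feeding a single click through `apply_room_response` returns the impulse response itself, which is a quick sanity check; feeding a dry spot-microphone track returns that track as it would sound in the modeled room.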

During the presentation, the live drummer was fitted with a mocap suit and live-rendered as an avatar. We then reproduced the pre-recorded playback to the observer and the musician. The observer was fitted with open-back headphones in order to be able to hear the local drummer too. See the outcome in the video below.

Game characters are animated using the pre-recorded motion capture and sound of a djembe percussion trio and a dancer. During the presentation, a live, motion-captured musician joins the performance, becoming a real-time avatar (brighter color).

An audience member can observe the whole performance using a VR headset. The pre-recorded sound is processed with a dynamic spatial audio engine and acoustic filters to match the local acoustics. Sound in the external view is from my phone (excuse the bad quality).

Several future improvements are planned. Formal research investigations will follow using the paradigms involved in this pilot test. As far as music goes, more types of instrumentations and music should be tested, as well as more types of interactive combinations (e.g. all musicians in real-time, audience in a separate room etc.). Variations and modifications of these factors imply different sets of requirements and different possibilities.

HoloDeck – The future of interactive music

Recording and motion capturing an African djembe trio and a dancer

The HoloDeck computing network aims to prototype the digital collaborative interactions of the future. By setting up an audiovisual network of data exchange between calibrated rooms, we can do today what will be possible 10 years from now with augmented and mixed reality technologies. We can thus study the social impact of remote digital immersive interaction for a vast number of applications.

One of the many applications of interest to us is music. How will remote music performance improve in the future? How can VR and AR be used to give a realistic impression to the musician and to the audience alike? Will we ever achieve an immersive digital experience comparable to real-life collaborative performance? In other words… can we make people create music remotely together and believe this is real? These are the questions that we are approaching by putting together this first iteration of our VR demo experience.

In order to collect material for our experiments, we recorded the sound and motion capture of an African percussion trio, plus a dancer (all members of the NYU percussion program). This material can later be used to make digital avatars that represent these musicians… sort of video-game characters. These avatar characters will eventually be used to provide a canvas in VR to test the response of additional musicians, dancers, and audience members. In these videos, we show the recording setup for 2 of the 3 drummers, and the capture of the dancer.

Motive and Optitrack were used for the motion capture and ProTools for recording the sound. We tried to capture the different sound areas of the djembe in the most isolated way possible using spot mics, in order to create audio objects that are easy to manipulate in a VR implementation. The motion tracking suits did require a little bit of posture adaptation in the performers’ drumming technique, but the results were good nonetheless. For the dancer, we simply played back the lead-drum recording and mocapped the movements. In a future version, we will try to capture the sound of the footsteps too.

Click here for 2nd part

Thanks also to:

Marta Gospodarek & Sripathi Sridhar

Performers:

Christopher Allen O’Leary, Max Meyer & Jared Shaw